Highlights:

- A novel AI technique called CoT-Valve can dynamically adjust the length of reasoning chains based on task difficulty.

- Reduces inference costs without significantly impacting performance.

- Achieves better efficiency than traditional prompt-based length control on math reasoning tasks.

- Uses a “valve-like” mechanism to control reasoning length with minimal model adjustments.

- Demonstrated success with QwQ-32B-Preview, reducing token usage by up to 70% with minimal accuracy loss.

TLDR:

Researchers at the National University of Singapore developed CoT-Valve, a technique that helps AI models think more efficiently by adjusting the length of their reasoning steps based on task complexity. This reduces computational costs while maintaining high accuracy, making AI systems more efficient and adaptable.

AI Models That Think More Efficiently: Introducing CoT-Valve

Imagine trying to solve a simple math problem like 100 – 28. You don’t need to go through a detailed explanation—just a quick calculation will do. But for a complex calculus equation, you might break it down step-by-step. Now, what if AI models could do the same? That’s precisely what researchers from the National University of Singapore have achieved with CoT-Valve, a novel technique that gives AI systems the ability to adjust the length of their reasoning chains based on task difficulty.

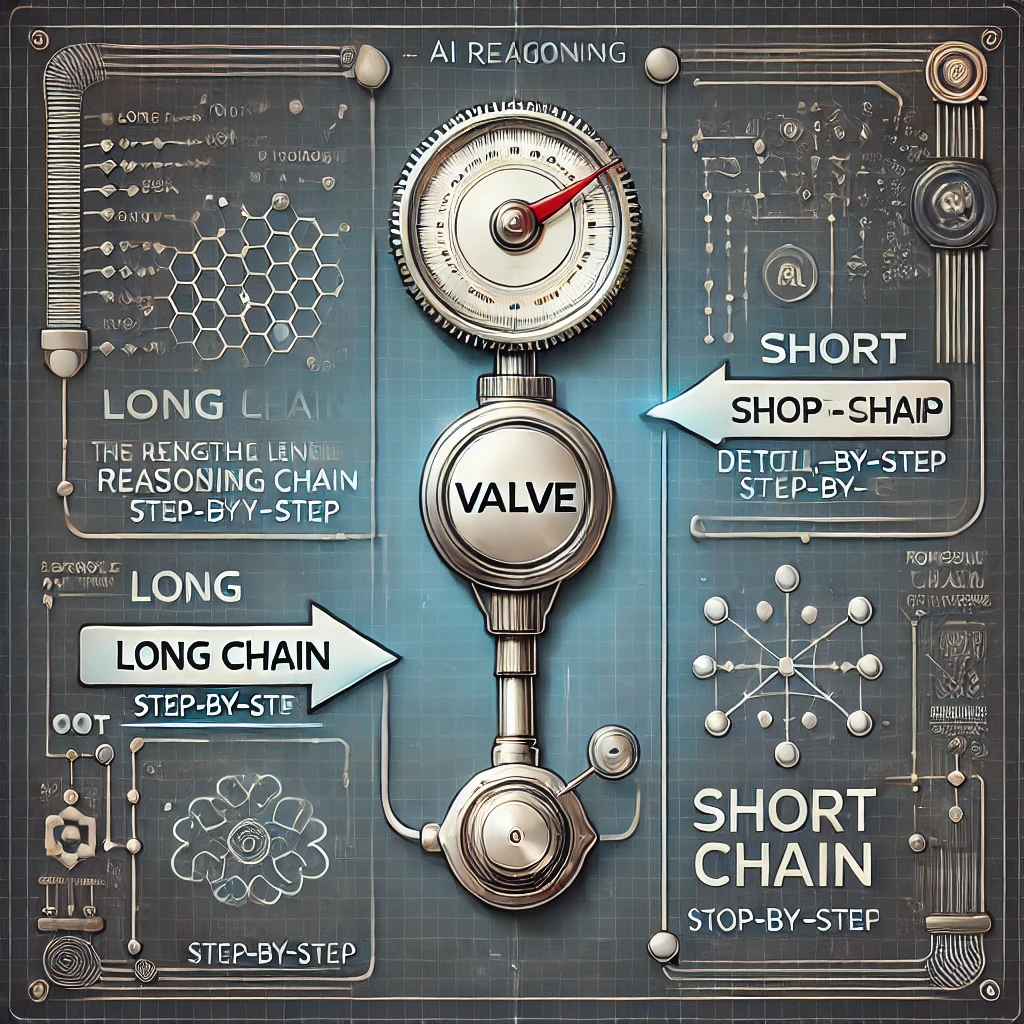

Traditionally, AI models use Chain-of-Thought (CoT) reasoning to improve performance by working through a problem in explicit intermediate steps. However, this often produces unnecessarily long explanations for simple tasks, driving up computational costs. CoT-Valve addresses this by acting like a “valve” that controls the flow of these thought chains: long when needed, short when possible.

Why Chain-of-Thought Matters—but Not Always in Full Length

The Chain-of-Thought technique has been revolutionary for tasks like math word problems, coding, and logical reasoning. It helps models like OpenAI’s GPT series and Google’s Gemini think through complex problems in structured steps. However, this method often produces redundant or excessive reasoning steps.

The researchers observed that simpler tasks don’t need these exhaustive step-by-step processes. By introducing CoT-Valve, they enabled models to compress or expand their reasoning paths dynamically—without needing multiple specialized models. This approach significantly reduces inference costs while maintaining accuracy.

The Valve Mechanism: How It Works

CoT-Valve uses LoRA (Low-Rank Adaptation), a fine-tuning method that adds small low-rank updates to a model’s existing weights, to introduce a “valve-like” control mechanism within the AI’s internal parameters. Think of it as turning a dial: a small turn leaves the longer reasoning paths that complex tasks need, while a larger turn shortens the chains for simpler ones.

This control is achieved by moving the model along a specific direction in its parameter space. How far the model moves along that direction sets how much reasoning it produces, much like a human adjusting their level of detail based on the question at hand.
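The dial can be pictured as a single scaling factor applied to a low-rank weight update. The sketch below is a minimal illustration in plain Python, not the authors' implementation: the helper names `matmul` and `valve_weights`, the factor `alpha`, and the toy matrices are all assumptions introduced here. It assumes `alpha = 0` leaves the base weights untouched (the longest chains) and that larger values move further along the learned direction (shorter chains), in line with the dial description above.

```python
def matmul(a, b):
    """Multiply two matrices stored as nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def valve_weights(w_base, lora_b, lora_a, alpha):
    """Return w_base + alpha * (lora_b @ lora_a), the weights at dial setting alpha."""
    delta = matmul(lora_b, lora_a)  # low-rank update direction
    return [[w + alpha * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(w_base, delta)]


# Toy 2x3 layer with a rank-1 update (all values are invented for illustration).
w_base = [[1.0, 0.0, 2.0],
          [0.0, 1.0, 0.0]]
lora_b = [[1.0], [2.0]]        # shape 2x1
lora_a = [[0.5, 0.0, -1.0]]    # shape 1x3

# Sweep the hypothetical dial from "fully open" (0.0) to "fully turned" (1.0).
for alpha in (0.0, 0.5, 1.0):
    print(alpha, valve_weights(w_base, lora_b, lora_a, alpha))
```

Because only the scalar changes between settings, one set of stored weights plus one low-rank update can stand in for a whole family of models with different reasoning lengths.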

Real-World Impact: Faster, Cheaper AI Reasoning

To test the effectiveness of CoT-Valve, the team applied it to QwQ-32B-Preview, a state-of-the-art reasoning model. On the GSM8K math dataset, they reduced the average reasoning chain from 741 to 225 tokens, a reduction of nearly 70%, while the model’s accuracy dropped only marginally, from 95.07% to 94.92%.

The team also tested CoT-Valve on AIME, a challenging math benchmark. Here, they shortened the reasoning paths from 6827 to 4629 tokens (roughly a 32% reduction), at the cost of just one additional incorrect answer. These results suggest that CoT-Valve saves computational resources while largely retaining the performance expected from leading AI models.
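The savings implied by these figures can be checked with a few lines of arithmetic. The snippet below uses only the token counts and accuracies quoted above; the function name `token_reduction` is introduced here for illustration.

```python
def token_reduction(before, after):
    """Fraction of tokens saved when a chain shrinks from `before` to `after`."""
    return (before - after) / before


gsm8k = token_reduction(741, 225)    # GSM8K average chain length
aime = token_reduction(6827, 4629)   # AIME average chain length

print(f"GSM8K token reduction: {gsm8k:.1%}")  # 69.6%
print(f"AIME token reduction:  {aime:.1%}")   # 32.2%
print(f"GSM8K accuracy change: {94.92 - 95.07:.2f} points")  # -0.15 points
```

The harder benchmark compresses far less than the easier one, which fits the idea that difficult problems need longer chains.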

Beyond Math: Versatile Applications for Smarter AI

While math problems provided a useful testbed, the potential applications of CoT-Valve may go much further. AI models for coding, scientific simulations, and even visual understanding could plausibly benefit from this technique, though the results reported here cover math only. The ability to adjust reasoning complexity on the fly could make AI assistants more responsive and cost-efficient across a range of tasks.

Moreover, the researchers introduce a dataset called MixChain, which contains paired long and short reasoning paths for the same questions. This dataset helps further refine the model’s ability to control reasoning length precisely.
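One way to picture a paired record is sketched below. The actual MixChain schema is not described here, so the class `ChainPair`, its field names, and the word-count length measure are all hypothetical; the example question is the subtraction problem from the introduction.

```python
from dataclasses import dataclass


@dataclass
class ChainPair:
    """Hypothetical record: one question with a long and a short reasoning path."""
    question: str
    long_chain: str
    short_chain: str
    answer: str

    def compression(self):
        """Short-to-long length ratio, using whitespace-separated words as a crude token proxy."""
        return len(self.short_chain.split()) / len(self.long_chain.split())


pair = ChainPair(
    question="What is 100 - 28?",
    long_chain="Start from 100. Subtract 20 to get 80. Subtract 8 more to get 72.",
    short_chain="100 - 28 = 72.",
    answer="72",
)
print(f"short/long length ratio: {pair.compression():.2f}")
```

Both chains end at the same answer, so the pair isolates reasoning length as the only thing that differs.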

The Bigger Picture: Efficiency in an AI-Driven World

As AI models grow larger and produce longer reasoning chains, their computational demands rise sharply. Innovations like CoT-Valve offer a promising way to curb these costs by optimizing when and how much models reason through a problem. By adopting more adaptive reasoning methods, researchers could make AI tools more accessible, efficient, and sustainable.

Looking ahead, the team plans to explore even finer-grained control mechanisms. Future work might allow AI systems to selectively apply short or long reasoning paths to different parts of a task, further improving efficiency without sacrificing performance.